June 15, 2025 · essay

Building an AI-Powered Video Clipper with an AI Co-Developer

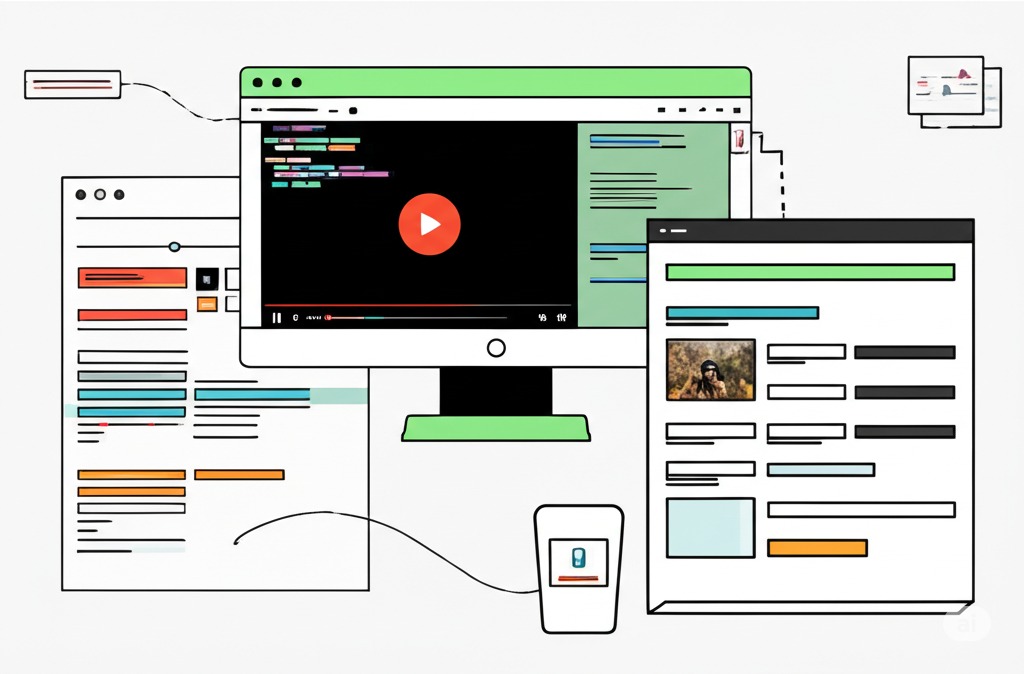

Journey with me as I transformed a Python notebook for AI video clipping into a full-fledged, modular Streamlit application, leveraging an AI partner for 100% of the code refactoring, service creation, and UI development. Discover the power of an AI-First approach to building practical tools!

The short version

- LLM: Google Gemini 2.5 Pro Preview, acting as the primary software developer, guided by a comprehensive system instruction and iterative architectural decisions from the human orchestrator.

- Why: To transform a functional Python Jupyter Notebook for AI-powered video clipping into a user-friendly, modular Streamlit web application, enhancing accessibility and maintainability.

- Challenge: Refactoring a monolithic notebook script into a service-oriented architecture (core, services, orchestrators, UI); implementing robust error handling, GPU utilization for Local Whisper, and multiple transcription pathways (YouTube API, Local Whisper, OpenAI API); ensuring effective LLM prompting for both topic identification and precise segment extraction within user-defined constraints; and managing FFMPEG integration for clip generation within a Streamlit environment.

- Outcome: A fully functional Streamlit application, `video-clipper-streamlit`, capable of ingesting YouTube URLs or local MP4s, offering flexible transcription, AI-driven topic identification, AI-powered segment extraction based on topics and duration, and automated FFMPEG clip generation with a real-time processing log and downloadable results. The entire refactoring and all new code for the Streamlit app were generated by the AI collaborator.

- AI approach: This project fully embraced an AI-First development philosophy. The AI collaborator (Gemini) was responsible for 100% of the code generation for the new Streamlit application structure, including all Python modules for services, orchestration, core components, and the Streamlit UI in `app.py`. The human developer defined the architecture, provided the logic from the original notebook as a specification, crafted prompts for each module, and iteratively tested and validated the AI's output.

- Learnings: AI (like Gemini) can effectively execute complex refactoring and full-stack application development when provided with clear architectural blueprints and iterative guidance. Modular design is crucial for managing AI-generated code. Robust FFMPEG path handling and explicit GPU device management are essential for local processing components. Iterative prompt engineering is key to achieving the desired LLM outputs for tasks like topic identification and constrained segment extraction. In an AI-First workflow, the human role shifts significantly toward architect, prompter, and validator.

For a while now, I've been interested in automatically extracting meaningful, short clips from longer video content. The concept started as a Python Jupyter Notebook (clipper.ipynb in my repository): a functional script that takes a video, transcribes it, and uses Large Language Models (LLMs) to identify and cut segments. Powerful as it was, a notebook isn't the most user-friendly interface for a tool I wanted to be broadly accessible.

So, today, I embarked on a mission: transform that Python notebook into a fully interactive Streamlit web application. This endeavor was a true exercise in my AI-First development philosophy, where my AI collaborator (Google Gemini 2.5 Pro Preview) was tasked with the entire refactoring and new code generation, all guided by a comprehensive system instruction and my iterative architectural decisions. This project mirrors the rapid re-platforming approach I discussed when transforming a CLI tool into an Electron GUI.

The Vision: An Intelligent, User-Friendly Clipper

The goal was to create an application where users could:

- Input a YouTube URL or upload a local MP4 file.

- Choose their preferred transcription method:

  - YouTube's API (for speed with YouTube videos).

  - Local Whisper (for privacy and control, using a local GPU if available; local AI is a topic I'm passionate about, as in my explorations with NVIDIA GPUs and Ollama).

  - OpenAI's Whisper API (for potentially the highest accuracy, via the cloud).

- Let an AI analyze the transcript to identify key topics.

- Have another AI extract relevant video segments for each topic, adhering to user-defined duration constraints.

- Automatically generate these clips using FFMPEG.

- Download the resulting snippets with ease.
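The clip-generation step in the list above boils down to one FFMPEG invocation per segment. Below is a minimal sketch, assuming each segment arrives as start/end times in seconds. The helper names `build_clip_command` and `cut_clip` are hypothetical, not taken from the app. This version re-encodes (libx264/AAC) so that cuts land on the requested timestamps. A faster stream copy (`-c copy`) would snap each cut to the nearest keyframe.

```python
import subprocess
from pathlib import Path


def build_clip_command(
    source: Path,
    output: Path,
    start_s: float,
    end_s: float,
    ffmpeg_bin: str = "ffmpeg",
) -> list[str]:
    """Hypothetical helper: FFMPEG arguments that cut [start_s, end_s) from source."""
    if end_s <= start_s:
        raise ValueError(f"end ({end_s}) must be after start ({start_s})")
    return [
        ffmpeg_bin,
        "-y",                            # overwrite an existing output file
        "-ss", f"{start_s:.3f}",         # seek to the clip start (input option)
        "-i", str(source),
        "-t", f"{end_s - start_s:.3f}",  # clip duration, not the end timestamp
        "-c:v", "libx264",               # re-encode video for frame-accurate cuts
        "-c:a", "aac",
        str(output),
    ]


def cut_clip(source: Path, output: Path, start_s: float, end_s: float) -> None:
    """Run FFMPEG; raises CalledProcessError if it exits with a non-zero status."""
    subprocess.run(
        build_clip_command(source, output, start_s, end_s),
        check=True,
        capture_output=True,
    )
```

Keeping command construction separate from execution makes the argument list easy to unit-test and to show in a processing log before FFMPEG runs.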

This required moving beyond a linear script to a modular, service-oriented architecture suitable for a web application, a pattern essential for building more complex systems like my Desktop AI Assistant.

The AI-Driven Refactoring Journey: From Monolith to Micro-Services

The core of this "build" was less about me writing code and more about me architecting the system and prompting my AI partner to implement it. The process involved:

- Defining the New Architecture: We decided on a structure with:

  - app.py: the Streamlit UI.

  - core/: Pydantic data models (models.py) and shared constants (constants.py).

  - services/: distinct functionalities (system, video, transcription, LLM, FFMPEG).

  - orchestrators/: pipeline logic (common_steps.py, youtube_pipeline.py, local_mp4_pipeline.py), similar to how n8n orchestrates complex AI workflows.

  - utils.py: a thin facade over the orchestrators for app.py.

- Implementing Each Service: For each service, I provided the AI with the relevant snippets from the old notebook and described the required functions, their inputs, outputs, and error handling. For instance, for transcription_service.py, I'd outline the three methods and prompt for the implementation of each, including the FFMPEG audio extraction step for the OpenAI API.

- Building the Orchestration Layer: The orchestrator functions, implemented in orchestrators/ and exposed to the UI through utils.py, were designed to manage the end-to-end flow, deciding which services to call based on user input. This required careful prompting to ensure correct data handoff between services and robust error propagation.

- Crafting the Streamlit UI (app.py): With the backend logic modularized, the AI then built the Streamlit interface. This included a sidebar for all user configuration; dynamic display areas for the processing log, GPU information, and results; logic to handle user input and trigger the processing pipeline; and a results view listing each generated clip with a download button. My prompts here focused on layout, widget types, and session state management. The ease of building UIs with Streamlit is a key part of my AI development power stack.
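The OpenAI API pathway in the transcription service step above needs an extra FFMPEG pass to pull the audio out of the video before upload. Below is a minimal sketch of that command, assuming a small mono MP3 is acceptable input. The helper name and the specific settings (16 kHz, 32 kbps) are illustrative choices, not the app's confirmed values.

```python
from pathlib import Path


def build_audio_extract_command(
    video: Path, audio_out: Path, ffmpeg_bin: str = "ffmpeg"
) -> list[str]:
    """Hypothetical helper: FFMPEG arguments that drop video and write a compact MP3."""
    return [
        ffmpeg_bin,
        "-y",                  # overwrite an existing output file
        "-i", str(video),
        "-vn",                 # drop the video stream entirely
        "-ac", "1",            # downmix to mono; speech rarely needs stereo
        "-ar", "16000",        # 16 kHz sample rate is adequate for speech
        "-c:a", "libmp3lame",  # MP3 encoder
        "-b:a", "32k",         # ~4 KB/s, roughly 14 MB per hour of audio
        str(audio_out),
    ]
```

OpenAI's transcription endpoint limits upload size (25 MB at the time of writing), which is why a low bitrate helps; very long videos may still need to be split into chunks. With the v1 Python SDK, the resulting file would be sent via `client.audio.transcriptions.create(model="whisper-1", file=...)`.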
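The orchestration layer described above chooses services based on user input and must survive services that return `None`. Below is a minimal sketch of that shape. It assumes services are plain callables and uses a dataclass `Segment` as a stand-in for the Pydantic models in core/models.py. `TranscriptionMethod`, `PipelineError`, and `run_pipeline` are hypothetical names, not the app's actual API.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Callable, Optional


class TranscriptionMethod(Enum):
    YOUTUBE_API = "youtube_api"
    LOCAL_WHISPER = "local_whisper"
    OPENAI_API = "openai_api"


@dataclass
class Segment:
    """Stand-in for a Pydantic model in core/models.py (fields are assumed)."""
    topic: str
    start_s: float
    end_s: float

    @property
    def duration_s(self) -> float:
        return self.end_s - self.start_s


@dataclass
class PipelineResult:
    transcript: str
    topics: list[str]
    segments: list[Segment] = field(default_factory=list)


class PipelineError(RuntimeError):
    """Raised when a required step produces no usable output."""


Transcriber = Callable[[str], Optional[str]]


def run_pipeline(
    source: str,
    method: TranscriptionMethod,
    transcribers: dict[TranscriptionMethod, Transcriber],
    identify_topics: Callable[[str], Optional[list[str]]],
    extract_segments: Callable[[str, str], Optional[list[Segment]]],
) -> PipelineResult:
    transcriber = transcribers.get(method)
    if transcriber is None:
        raise PipelineError(f"No transcriber registered for {method.value}")

    transcript = transcriber(source)
    if not transcript:  # catches both None and an empty string
        raise PipelineError(f"{method.value} returned no transcript for {source!r}")

    topics = identify_topics(transcript)
    if not topics:
        raise PipelineError("Topic identification returned nothing")

    result = PipelineResult(transcript=transcript, topics=topics)
    for topic in topics:
        # A None for a single topic is skipped rather than failing the whole run.
        result.segments.extend(extract_segments(transcript, topic) or [])
    return result
```

Turning `None` into a named exception at each required step gives the UI a single, descriptive error to display instead of an `AttributeError` several calls later.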
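For the processing-log display area mentioned above, one framework-agnostic approach is to accumulate timestamped messages and push the full text to a callback whenever a message is added. Below is a minimal sketch. `ProcessingLog` is a hypothetical class, and the Streamlit wiring in the note after it is an assumption about how app.py could use it.

```python
import time
from dataclasses import dataclass, field
from typing import Callable, Optional


@dataclass
class ProcessingLog:
    """Hypothetical log holder; app.py could keep one in st.session_state."""
    entries: list[str] = field(default_factory=list)
    on_update: Optional[Callable[[str], None]] = None  # e.g. re-render a UI widget
    clock: Callable[[], float] = time.time              # injectable for testing

    def add(self, message: str) -> None:
        stamp = time.strftime("%H:%M:%S", time.localtime(self.clock()))
        self.entries.append(f"[{stamp}] {message}")
        if self.on_update is not None:
            self.on_update(self.as_text())

    def as_text(self) -> str:
        return "\n".join(self.entries)
```

In Streamlit, the callback could target a placeholder created with `st.empty()`, for example `ProcessingLog(on_update=placeholder.code)`, so the log box refreshes in place while the pipeline runs.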

Key Technical Hurdles (and AI-Assisted Solutions):

Throughout this AI-driven build, we encountered and solved several interesting challenges:

- FFMPEG Accessibility in Streamlit: Ensuring ffmpeg.exe (bundled within the video-clipper-streamlit app directory) was consistently found by subprocess calls. The solution involved services/system_service.ensure_ffmpeg_is_accessible().

- Local Whisper on GPU: Getting Local Whisper (via stable-whisper) to correctly utilize the NVIDIA GPU. The AI helped craft debugging logs that pinpointed discrepancies, a process reminiscent of the detailed setup required for running Ollama with NVIDIA GPUs.

- LLM Prompt Engineering for Segment Extraction: Initially, the LLM struggled with strict duration criteria. This required iterative refinement of the prompt in services/llm_service.py, much like optimizing prompts for tools like code2prompt or Context7 to get desired outputs.

- Handling NoneType Errors in Orchestration: Ensuring orchestrator functions gracefully handled potential None returns from services was crucial.

- Python Imports in a Modular Structure: Managing imports across core, services, and orchestrators was a detailed task the AI handled well.
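The post names `services/system_service.ensure_ffmpeg_is_accessible()` for the FFMPEG hurdle but doesn't show its body. Below is a guess at one way such a function could work, assuming the bundled binary sits directly in the app directory. It moves that directory to the front of `PATH` so that plain `["ffmpeg", ...]` subprocess calls resolve to the bundled copy. This is a sketch, not the app's implementation.

```python
import os
import shutil
from pathlib import Path
from typing import Optional


def ensure_ffmpeg_is_accessible(app_dir: Path) -> Optional[str]:
    """Sketch: prefer a bundled FFMPEG by putting app_dir first on PATH.

    Returns the path subprocess calls will resolve "ffmpeg" to, or None if
    no FFMPEG binary is reachable at all.
    """
    binary = "ffmpeg.exe" if os.name == "nt" else "ffmpeg"
    app_str = str(app_dir.resolve())
    if (Path(app_str) / binary).is_file():
        # Drop empty entries and any existing copy of app_dir, then prepend it.
        entries = [
            p for p in os.environ.get("PATH", "").split(os.pathsep)
            if p and p != app_str
        ]
        os.environ["PATH"] = os.pathsep.join([app_str, *entries])
    return shutil.which("ffmpeg")
```

Because `os.environ` changes are inherited by child processes, calling this once at startup covers every later `subprocess.run` call made by the services. Returning the resolved path also gives the UI something concrete to show when FFMPEG is missing.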
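For the Local Whisper GPU hurdle, it helps to gather the facts that usually explain a silent fallback to CPU. Below is a minimal diagnostics sketch using standard PyTorch calls. It assumes PyTorch is the backend, as it is for stable-whisper. The function name is hypothetical, and the post doesn't say which discrepancy the app's own logs actually uncovered.

```python
from typing import Any


def gpu_diagnostics() -> dict[str, Any]:
    """Hypothetical helper: report why Whisper might (not) run on the GPU.

    Safe to call without torch installed, so it can back a sidebar panel.
    """
    try:
        import torch
    except ImportError:
        return {"torch_installed": False, "device": "cpu"}

    info: dict[str, Any] = {
        "torch_installed": True,
        "torch_version": torch.__version__,
        # None means a CPU-only PyTorch build, a common cause of CPU fallback.
        "built_with_cuda": torch.version.cuda,
        "cuda_available": torch.cuda.is_available(),
    }
    if info["cuda_available"]:
        info["device_count"] = torch.cuda.device_count()
        info["device_name"] = torch.cuda.get_device_name(0)
    info["device"] = "cuda" if info["cuda_available"] else "cpu"
    return info
```

The chosen device can then be passed explicitly when loading the model, e.g. `stable_whisper.load_model("base", device=gpu_diagnostics()["device"])`. Passing it explicitly makes the device visible in code rather than leaving it to library defaults.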
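On the segment-extraction hurdle, a complement to prompt refinement (not necessarily something the app does) is to check the LLM's reply against the duration limits in code. That way, out-of-range or malformed segments never reach FFMPEG. Below is a minimal sketch, assuming the prompt asks for a JSON array of objects with `start`, `end`, and optional `title` fields in seconds. That schema and the names `CandidateSegment` and `parse_segments` are hypothetical.

```python
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class CandidateSegment:
    start_s: float
    end_s: float
    title: str = ""

    @property
    def duration_s(self) -> float:
        return self.end_s - self.start_s


def parse_segments(llm_reply: str, min_s: float, max_s: float) -> list[CandidateSegment]:
    """Parse an assumed JSON array of {"start", "end", "title"} objects and keep
    only segments whose length falls within [min_s, max_s]."""
    try:
        raw = json.loads(llm_reply)
    except json.JSONDecodeError:
        return []
    if not isinstance(raw, list):
        return []
    kept: list[CandidateSegment] = []
    for item in raw:
        try:
            seg = CandidateSegment(
                float(item["start"]), float(item["end"]), str(item.get("title", ""))
            )
        except (KeyError, TypeError, ValueError, AttributeError):
            continue  # skip malformed entries instead of failing the whole batch
        if min_s <= seg.duration_s <= max_s:
            kept.append(seg)
    return kept
```

Real LLM replies often arrive wrapped in Markdown code fences, which would need stripping before `json.loads`. Counting how many candidates get rejected is also a useful signal for the next round of prompt tuning.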

The Outcome: A Functional, Modular AI Tool

The result is the video-clipper-streamlit application we've been working on! It now successfully:

- Accepts YouTube URLs or MP4 uploads.

- Offers three distinct transcription methods.

- Uses LLMs to identify topics and then extract relevant segments.

- Generates downloadable video clips.

- Provides real-time feedback through a processing log.

The modular structure makes it significantly easier to maintain and extend compared to the original monolithic notebook.

Learnings from an AI-First Build:

- AI as a Full-Stack Developer: With clear architecture and precise prompting, LLMs can write the vast majority of application code. This is the evolution of "Fluid Dev", where conversational AI becomes the new IDE.

- Modularity is Key (Even for AI): Breaking problems into smaller modules helps both the human architect and the AI coder.

- System Instructions are Foundational: A detailed upfront system instruction is invaluable, a principle I highlighted in my 15 Power Tips for AI-First Development in AI Studio.

- Human Role Shifts: My primary effort was designing, defining interfaces, prompting, and validating.

- Debugging is Collaborative: Providing errors and context to the AI helps it generate fixes.

This project powerfully demonstrates the "AI-First" paradigm. It's not about using AI as a simple "AI hammer" for trivial tasks (though that has its place), but for constructing more complex, useful applications.

Next Steps for the Clipper:

- Further refining LLM prompts for even more consistent segment extraction.

- Adding more UI/UX polish, perhaps more granular progress indicators.

- Extensive testing with a wider variety of video inputs.

It's exciting to see a tool like this come together, primarily through guided AI generation, showcasing a new era of rapid application development! For more insights into AI-driven development and automation, visit workflows.diy.